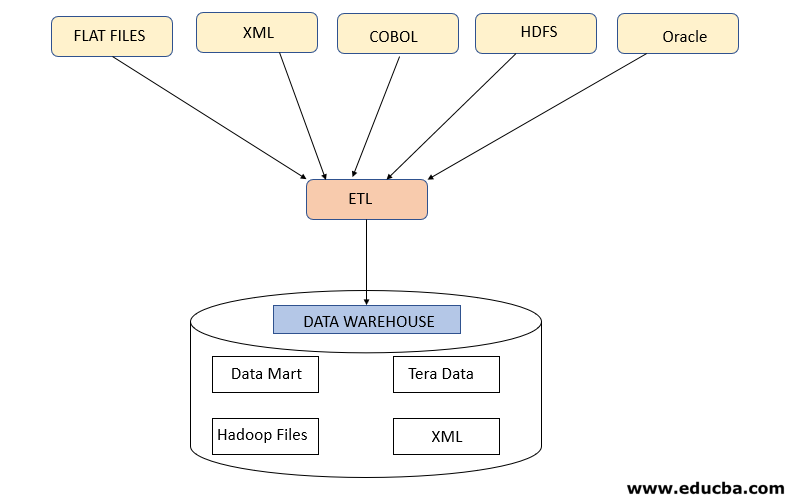

Which is the easiest ETL tool to learn?

An ETL tool is software that automates the process of extracting, transforming, and loading (hence the name) data from one source into another. It’s a crucial part of any data warehouse project because it ensures consistency and accuracy, which decision-makers need in order to use data effectively.

There are many ways to get your data from one place to another in the data warehouse world, but there’s only one way to do it right: with an ETL tool.

The ETL process is a way of getting data from one system to another. It’s a set of steps that can be followed to move data. The goal is to take data in a format that isn’t compatible with your desired destination and make it consistent, so you can use it there.

Breaking Down the ETL Process into Stages

Extract

In the extract phase of an ETL (extract, transform, and load) process, data is extracted from one or more sources. The data sources can be databases, files, web APIs, or other systems. The purpose of the extract phase is to retrieve the data from the source systems and make it available for transformation and loading into the target system.

There are several steps involved in the extract phase:

1. Identify the data sources: Determine where all of the data you want to extract is stored.
2. Connect to the data sources: Connectors need to be sourced or developed for each source you want to retrieve data from.
3. Extract the data: Once the connection to the data source is established, you can extract the data using various methods, such as SQL queries, file-read operations, or API calls.
4. Store the data: The extracted data is typically stored in a staging area, such as a temporary file or database table, to be used in the next phase of the ETL process.

It is crucial to consider the performance and reliability of the extract phase, as well as any error handling and recovery mechanisms that may be needed. You should also validate the extracted data to ensure that it is accurate and complete.

Logical and Physical Extraction

Extracting data from a source system is one of the steps in the ETL process. You can do it in two ways: logical and physical.

Logical extraction involves extracting data from a source system based on rules or queries. It can be done in many ways, depending on the data you’re trying to extract. For example, you could use an SQL query like this:

`SELECT * FROM customers WHERE name='John Smith'`

This query finds all records where the name field is exactly ‘John Smith’ and returns every matching record, including all of its other fields.

If you only need one field from those records, you can narrow the column list with a query like this:

`SELECT email FROM customers WHERE name='John Smith'`

This query returns only the emails of customers whose names match ‘John Smith.’ Narrowing a query this way helps pull specific data out of larger stores held in different formats.

Physical extraction is typically done using third-party software or services. Still, it’s also possible for developers to create custom scripts for this purpose using languages like Python or Ruby.

The extraction process using Datorios

Datorios is a data integration and extraction tool that can be used as part of an ETL (Extract, Transform, Load) process. In ETL, the extract phase involves retrieving data from various sources, such as databases, files, or APIs.

You can use the Datorios tool to extract data from a variety of sources, such as relational databases (e.g., MySQL, Oracle, or PostgreSQL), non-relational databases (e.g., MongoDB or Cassandra), files (e.g., CSV, JSON, or Excel), and APIs (e.g., REST or SOAP). To extract data using Datorios, you will need to specify the source of the data and the connection details required to access it.
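For readers who prefer to see the extract steps as code, here is a minimal sketch in plain Python. It does not use Datorios or any of its APIs. It assumes an in-memory SQLite database standing in for the source system, a hypothetical `customers` table with `name` and `email` columns, and CSV text standing in for the staging area. The function names are illustrative only.

```python
import csv
import io
import sqlite3


def extract_customer_emails(conn, name):
    """Logical extraction: select only the rows and columns a rule asks for."""
    # A parameterized query avoids quoting problems in the name value.
    cursor = conn.execute(
        "SELECT name, email FROM customers WHERE name = ?", (name,)
    )
    return cursor.fetchall()


def stage_rows(rows, staging_file):
    """Store extracted rows in a staging area (here, CSV text)."""
    writer = csv.writer(staging_file)
    writer.writerow(["name", "email"])
    writer.writerows(rows)


def validate_rows(rows):
    """Basic completeness check: every extracted row must have an email."""
    return all(email for _name, email in rows)


# Identify and connect: an in-memory database stands in for the real source.
source = sqlite3.connect(":memory:")
source.execute("CREATE TABLE customers (name TEXT, email TEXT)")
source.executemany(
    "INSERT INTO customers VALUES (?, ?)",
    [
        ("John Smith", "john.smith@example.com"),
        ("Jane Doe", "jane.doe@example.com"),
    ],
)

# Extract, store, and validate, in the order described in the Extract stage.
rows = extract_customer_emails(source, "John Smith")
staging = io.StringIO()
stage_rows(rows, staging)
is_valid = validate_rows(rows)
source.close()
```

In a real pipeline the staging target would more likely be a temporary file or a staging table, and validation would usually compare row counts and value formats against the source rather than check a single column.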
